If you wish to execute the pipeline on another binary classification dataset, you can refer the tutorial.ipynb for instructions on how to setup the environment and run the pipeline.

During my work on a cold-start regression problem, conventional machine learning models underperformed on the dataset. I experimented with Chain-of-Thought (CoT) prompting, reasoning that the LLM’s pre-training data relevant to the domain could serve as an inductive bias. The LLM outperformed traditional models, but with a clear limitation: its pre-trained knowledge captured global domain-specific patterns while remaining blind to local patterns within the dataset. This gap prompted a question: could XAI attributes from an explainable tree-based model serve as hints to ground the LLM’s reasoning in local, dataset-specific patterns?

Pipeline Architecture

Figure 1: End-to-end pipeline workflow.

The pipeline ingests inputs for all components simultaneously. This includes the dataset configuration, an untrained tree model, the hyperparameter grid, and configurations of the three LLMs. First, the explainable tree-based model is trained and tuned. XAI attributes are extracted using the feature_importances_ attribute and SHAP’s TreeExplainer and stored. The train-test split, model predictions, feature importances, and the path to shap_values.csv are logged in config.json to ensure consistent data splits for a fair comparison.

Next, the shap_values.csv is clustered to identify distinct decision-making patterns, thereby improving context window management. The medoids of each cluster are passed to the Reason Generator, which converts numerical SHAP values into natural language reasoning. This reasoning is sent to the Objective Judge and ICL Classifier separately. The Objective Judge evaluates the Reason Generator’s output for reasoning quality based on custom rubrics. The ICL Classifier treats the previously generated reasoning as the reasoning in a CoT prompt and returns a detailed thought process, conclusion, and final prediction. The Evaluator retrieves the ground truth from config.json and the ICL Classifier’s predictions, then computes macro-averaged F1.

Both the deterministic and non-deterministic metrics are stored in metrics.json.

Design Decisions

Model Choices and Inference

The pipeline uses one tree-based ML model to learn local patterns and extract XAI attributes, and three LLMs, one per component. Any tree-based model compatible with SHAP’s TreeExplainer works here; the choice is left to the user.

The three LLMs were deliberately chosen from different model families to eliminate self-preference bias, a known failure mode where a model scores its own outputs more favorably than outputs from other models. By ensuring no component evaluates its own outputs, the pipeline structurally guarantees independent assessment. Each model was matched to its component based on two axes: task type and request volume.

-

ReasonGenerator processes a small number of requests, one per cluster medoid. Since volume is low, quality takes priority. DeepSeek-R1 from Together AI is a reasoning-first model, purpose-built for structured chain-of-thought generation, making it the right choice for converting numerical SHAP values into coherent natural language.

-

ObjectiveJudge also processes a small number of requests. Here, calibration matters most; the judge must score reasoning reliably without being sycophantic. Claude Haiku 4.5, from Anthropic, is well calibrated for rubric-based assessment at low volume and cost.

-

ICLClassifier processes 1,000+ requests, one per test sample. At this volume, cost dominates. Gemini 2.5 Flash from Google Vertex AI offers strong performance at a price point that keeps the pipeline affordable at scale.

All three components use batch inference, since the pipeline targets evaluation and benchmarking rather than real-time serving.

Diverse Example Selection

Cluster medoids are chosen as representative examples rather than centroids. Centroids are geometric averages that may not correspond to any actual data point in the training set, whereas medoids are guaranteed to be real training examples. The silhouette score is used to determine the optimal number of clusters, K, rather than the elbow method, since it yields a clean scalar metric that lends itself to automation without manual inspection.

Experiment Design

Scope and Datasets

The pipeline is useful in cold scenarios where a ML model does not perform well. Therefore, as part of the evaluation, four open-source binary classification benchmark datasets are used: titanic (n=891), pima diabetes (n=768), loan approval (n=4269), and mushroom edibility (n=8124).

Ablation Studies

Two ablation studies are carried out as a part of the evaluation:

- Is the performance because of CoT alone or SHAP?

- Is the performance driven by context provided by column metadata or SHAP?

The first ablation study compares the pipeline against zero-shot CoT to isolate the contribution of SHAP. The second masks column metadata to isolate the contribution of semantic context. It keeps data values intact but encodes categorical columns to prevent the LLM from reverse engineering column names from their values (e.g., a column with values ‘male’ and ‘female’ would reveal ‘gender’ even if the column name is masked).

Evaluation Rubrics

The pipeline is evaluated based on the quality of the reasoning generated by DeepSeek-R1 and classification performance of the ICLClassifier. The classification performance is evaluated based on macro-avg F1 score to account for class imbalance across the datasets. Unlike classification, the reasoning evaluation does not have a ground truth to compute deterministic metrics. Hence, the Objective Judge is used to evaluate the quality of the reasoning based on the following rubrics:

- Faithfulness: How accurately does the reasoning reflect the sign (direction) and relative magnitude of the SHAP values?

- Consistency: Does the reasoning focus on the features with the highest absolute SHAP values (most influential features) and prioritize them correctly?

- Coherence: Is the reasoning grammatically sound, well-structured, and easy for a non-expert to understand?

Experimental Results

Qualitative evaluation of reasoning generation

To illustrate the internal logic of the system, here are a few representative reasoning traces generated by DeepSeek-R1

Example 1: One instance from the Loan dataset (unmasked)

The model correctly predicted loan rejection (0) primarily due to two “critical negative factors: the applicant’s extremely low CIBIL score of 353 (SHAP: -4.43) and a 10-year loan term (SHAP: -2.65). These features—globally the most important according to the model—strongly pushed the prediction toward rejection. The CIBIL score’s substantial negative impact reflects its role as a key creditworthiness indicator, where 353 falls far below acceptable lending thresholds. The loan term’s large negative contribution suggests longer durations heighten default risk. Although the high loan amount (SHAP: +0.21) and valuable commercial/residential assets (SHAP: +0.14 and +0.08) provided positive signals, they were insufficient to offset the dominant negative drivers. The applicant’s annual income (₹66 lakhs) further supported rejection with a negative SHAP value (-0.26), while other features like self_employed status or asset values had negligible effects. Cumulatively, the SHAP values sum to approximately -6.92, decisively steering the prediction toward rejection (probability <0.5) by outweighing all positive contributions.

Example 2: One instance from the Titanic dataset (masked)

The model correctly predicts class 0, aligning with the ground truth label of 0.0. The SHAP values reveal that feature x2 (value: 1.0, SHAP: +1.38) exerts the strongest positive influence due to its high global importance (0.533), pushing the prediction toward class 1. However, x1 (value: 3.0, SHAP: -1.22) counters this with a substantial negative contribution, nearly neutralizing x2’s impact. The remaining features show modest effects: x3 (+0.30), x4 (+0.13), and x7 (+0.30) contribute positively, while x5 (-0.10) and x6 (-0.78) contribute negatively. Cumulatively, the net SHAP sum is a slight positive (+0.017). Given that the base value (average model output) is typically negative for class 0 in binary classification, this minor positive shift is insufficient to raise the logit above zero. Thus, the prediction remains class 0, driven primarily by x1’s strong negative influence and x6’s additional downward pressure, outweighing the positive contributions from other features.

Quantitative evaluation of reasoning generation

| Dataset | Number of clusters | Faithfulness | Consistency | Coherence |

|---|---|---|---|---|

| Titanic | 10 | 4.75 | 4.65 | 4.75 |

| Pima Diabetes | 2 | 4.75 | 4.75 | 4.75 |

| Loan Approval | 2 | 4.5 | 4.0 | 4.75 |

| Mushroom | 53 | 4.72 | 4.63 | 4.74 |

Table 1: Quantitative evaluation of reasoning output using Claude Haiku 4.5 as an Objective Judge (max=5 and min=1, higher the better)

The objective evaluation shows consistently high reasoning quality across all datasets, with scores ranging narrowly from 4.0 to 4.75. Coherence stands out as the strongest aspect, with a score of about 4.75, indicating that DeepSeek-R1 excels at generating grammatically correct and well-structured explanations that are understandable to non-experts. The metrics for consistency and faithfulness show a noticeable decline for Loan Approval, suggesting that under a token limit of 4096, it is difficult to represent all influential features, especially when the dataset has a large feature space and the decision outcome is highly nuanced.

Performance comparison with ML and LLM baselines

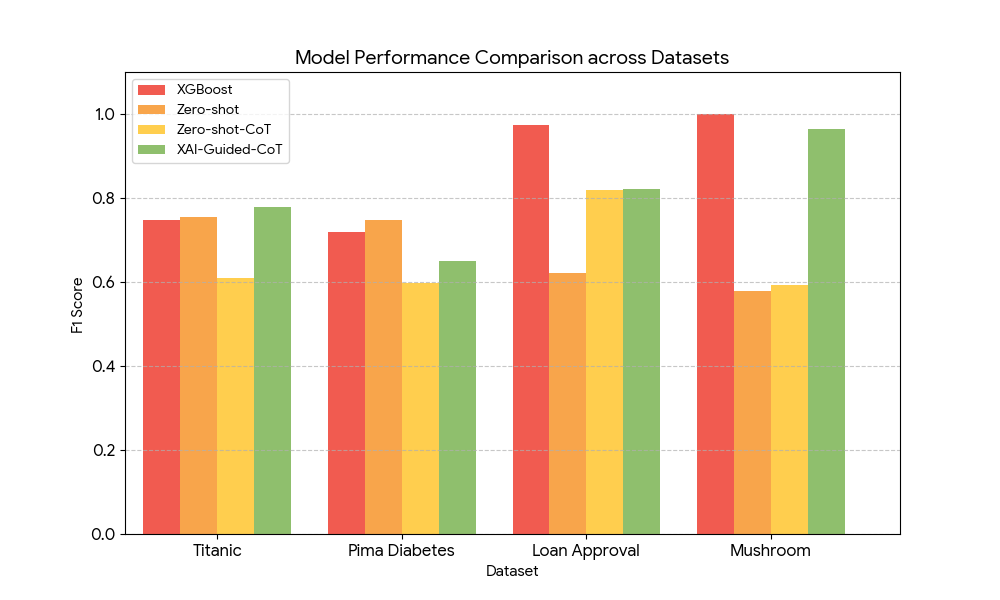

Figure 2: Comparison of the XAI-Guided-CoT with ML (XGBoost) and LLM baselines (zero-shot, zero-shot-cot).

Figure 2: Comparison of the XAI-Guided-CoT with ML (XGBoost) and LLM baselines (zero-shot, zero-shot-cot).

The plot presents a clear story. XAI-Guided-CoT reduces most of the gap between the zero-shot baseline and XGBoost on two of the four datasets: Loan Approval and Mushroom. Both datasets involve complex scenarios where generic domain knowledge is not enough, and local patterns matter. On the other two datasets, the zero-shot baseline performs surprisingly well, because it relies on memorized prior information rather than reasoning. For example, the Titanic dataset covers an event that is well-known, and most passengers perished. Therefore, the zero-shot baseline simply predicts the majority class without understanding the data.

The first ablation study confirms that the improvements aren’t solely due to CoT. Zero-shot CoT actually underperforms the zero-shot baseline on the Titanic dataset. This is because unstructured reasoning without any grounding can deviate from dataset patterns rather than improve them. The improvements specifically stem from SHAP-based reasoning and not from asking the model to think step by step.

Does column metadata provide context for CoT?

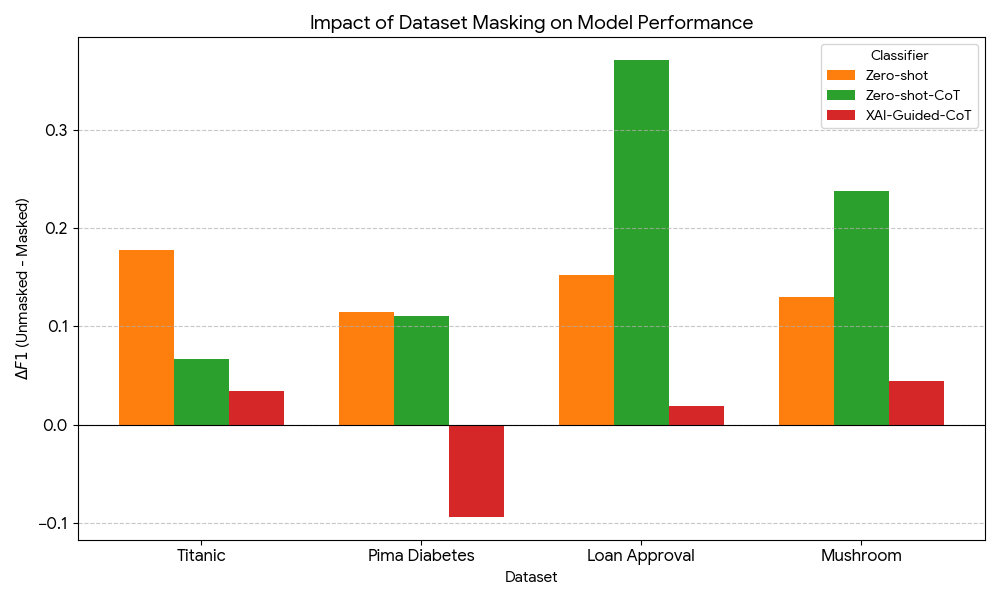

Figure 3: Comparing performance of prompt engineering approaches before and after masking the column metadata.

Figure 3: Comparing performance of prompt engineering approaches before and after masking the column metadata.

The plot depicts the change in the model performance after masking the column metadata. The quantity plotted on the y-axis is the difference between performance on unmasked dataset and performance on masked dataset. Masking column metadata substantially reduces performance across all the methods highlighting that semantic context embedded in feature names plays a key role in LLM predictions. Nonetheless, XAI-Guided-CoT retains 92-96% of its original performance and offers substantial advantages over baseline approaches: +0.168 on Titanic, +0.110 on Pima Diabetes, +0.334 on Loan Approval, and +0.473 on Mushroom. This demonstrates that SHAP-based reasoning provides genuine predictive value independent of domain knowledge embedded in feature nomenclature.

Reflection

- The core hypothesis held strongly. The local patterns learned by XGBoost transferred meaningfully to Gemini 2.5 Flash resulting in a 96% performance parity. Moreover, the quality of the reasoning generated by DeepSeek-R1 was consistently high across all the datasets despite varying complexity and domains. Interestingly, the XAI-Guided-CoT retained 92-96% of its original performance demonstrating that SHAP-based reasoning provided meaningful predictive value independent of semantic context.

- The performance on Pima diabetes dataset was surprising. This dataset is known to have biologically implausible zero values for blood pressure, likely encoded nulls whose interpretation is undisclosed in the original source. This ambiguity propagated into natural language reasoning which in turn confused the ICLClassifier bringing down the classification performance. However, when the column names were masked, the natural language reasoning was properly generated because the LLM did not know the column corresponded to blood pressure, leading to a very similar performance compared to XGBoost. This suggests that correct reasoning at one stage can introduce confusion at the next when the downstream component lacks the context to resolve the ambiguity.

- If I were to run the experiments again, I would tune the token limit for the ReasonGenerator and measure its effect on the consistency and faithfulness metric. A higher limit would allow the model to represent more influential features in the reasoning, potentially reducing the variability observed due to high cardinality datasets such as the Loan Approval dataset.

Can explainability attributes from a tree-based model guide an LLM’s reasoning on tabular data? The results suggest yes but with important caveats. SHAP-grounded reasoning outperforms generic CoT on complex tasks, but the pipeline is only as reliable as the signals it reasons over.